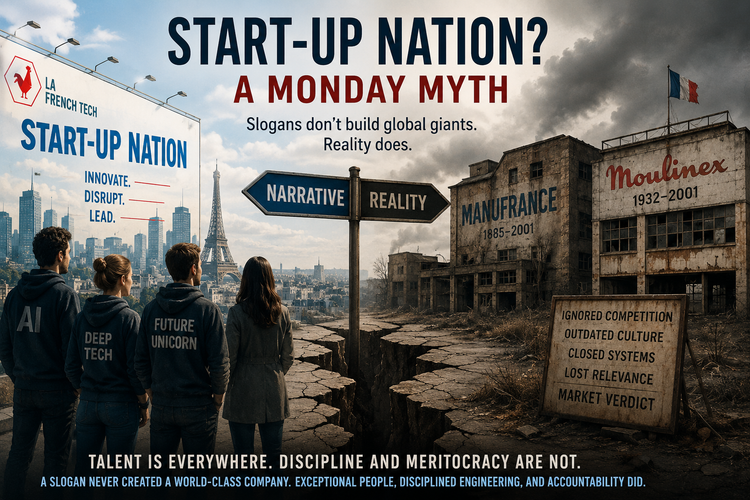

When Standards Decay, Systems Fail

We did not democratise engineering. We diluted it.

Standards did not disappear by accident. We replaced them. With noise, layers, and the illusion of competence.

And now we are surprised the system no longer holds.

Introduction

We keep talking about a talent shortage in tech, but that misses the point.

The real issue lies in the decay of standards, and the vacuum it creates.

When engineering standards erode, something replaces them. Nature does not tolerate a void. In organisations, that void fills with process, control, and opinion, often from people who carry no operational accountability for what they introduce.

We then mistake substitution for progress. Activity increases. Coordination expands. Delivery slows. Systems grow more fragile. Nobody quite understands why.

Look around. A sector congratulates itself for speed while shipping fragility, rewards titles faster than competence, and labels people “engineers” before they can reason about systems, failure modes, trade-offs, or operational consequences.

This does not stay technical. It becomes systemic. Weak standards create a vacuum. The vacuum invites intervention. Intervention introduces throttling. New tools amplify the illusion of competence. The loop closes into normalised instability.

A Fragile Industry by Design

The numbers remain unforgiving. In the United States, only 34.7% of private-sector establishments born in 2013 still operated in 2023. In the information sector, the ten-year survival rate drops to 29.1%. More than seven in ten do not make it to a decade.

Source: U.S. Bureau of Labor Statistics (2024)

Many factors drive failure: strategy, timing, market shifts. Poor engineering standards often accelerate it.

The Cost of Poor Engineering

The bill for bad software is not theoretical. CISQ estimated that poor software quality cost the United States at least $2.41 trillion in 2022, with technical debt accounting for roughly $1.52 trillion.

This reflects more than bad code. It signals lost optionality, delayed change, rising risk, and organisations trapped inside systems they no longer understand.

Source: Consortium for Information & Software Quality (CISQ), 2022 Report

When systems fail, costs escalate quickly. Uptime Institute reported in 2024 that 54% of respondents said their most recent significant outage cost more than $100,000, and one in five said it cost more than $1 million.

Source: Uptime Institute Global Data Center Survey 2024

Standards are not aesthetic. They are economic.

At scale, this is not inefficiency. It is capital misallocation disguised as delivery.

The Disappearance of the Problem Solver

Many software practitioners once entered the field through mathematics, physics, electronics, telecommunications, or operational necessity. They understood machines, constraints, interfaces, deployment, and failure. Programming served as a tool within a broader system of thinking.

Abstraction has shifted the landscape. Cloud platforms hide infrastructure. Frameworks reduce exposure. AI accelerates code production. None of this harms by default. Problems emerge when abstraction removes understanding, not just toil.

DORA’s 2024 research highlights this tension. It estimates that a 25% increase in AI adoption is associated with a 1.5% decrease in delivery throughput and a 7.2% reduction in delivery stability, unless teams maintain strong engineering practices such as small batch sizes and robust testing.

Source: Google Cloud DORA Report 2024

New tools do not compensate for weak fundamentals.

Excellence Remains Rare

The industry knows what good looks like, yet few reach it.

DORA benchmarks show that elite performers deploy 182 times more frequently, achieve 8 times lower change failure rates, 127 times faster lead times, and 2,293 times faster recovery than low performers. Fewer than 20% of organisations qualify.

Source: DevOps Performance Clusters, 2024
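The four measures behind those benchmarks are simple to compute once deployments are recorded honestly. What follows is a minimal sketch, not DORA's own tooling: the `Deployment` record, its field names, and the `delivery_metrics` function are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def delivery_metrics(deployments, window_days):
    """Summarise deployment frequency, lead time, failure rate, and recovery time."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")

    failures = [d for d in deployments if d.failed]
    # Recovery time is only known for failures that have been restored.
    recoveries = [
        d.restored_at - d.deployed_at
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deployments) / window_days,
        "median_lead_time": median(d.deployed_at - d.committed_at for d in deployments),
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore": median(recoveries) if recoveries else None,
    }
```

The arithmetic is trivial. The discipline lies in recording every deployment and every failure, including the embarrassing ones.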

Excellence results from discipline.

The Verification Gap in the Age of AI

AI introduces a new layer of risk when combined with weak standards.

A 2026 Sonar report found that 96% of developers do not fully trust AI-generated code, yet only 48% always verify it before committing. Additionally, 38% report that reviewing AI-generated code requires more effort than reviewing human-written code.

Source: SonarSource Press Release, 2026

Lower barriers to producing code, combined with weaker verification discipline, increase systemic risk.

The Vacuum Effect

Nature does not tolerate a vacuum. When engineering standards erode, a gap appears, not just in code quality, but in understanding, ownership, and accountability. The gap does not remain empty for long.

Non-technical stakeholders step in to fill it, not out of malice but out of necessity. Delivery slows, systems fail, and teams struggle to explain or fix issues with confidence. So stakeholders act, introducing processes, controls, and layers intended to restore order.

Without engineering grounding, these interventions degrade the system further. Work fragments into donkey tasking: decomposed, low-context activities that optimise coordination rather than outcomes. Decision-making grows heavier. Throughput drops while activity increases. The organisation mistakes motion for progress.

Platform thinking collapses at the same time. Instead of enabling leverage, platforms turn into bureaucratic layers: ticket queues, approval gates, compliance theatre.

Modern tooling amplifies the problem. Low-code, cloud abstraction, and AI lower the barrier to producing code. Non-technical profiles start contributing without owning production responsibilities: reliability, monitoring, rollback, security, and long-term maintenance.

The loop closes:

- Weak standards create a vacuum

- The vacuum invites unqualified intervention

- Intervention introduces fragmentation and throttling

- New tools amplify the illusion of competence

- Accountability erodes

- Instability increases

What starts as a gap becomes normalised incompetence.

The Leadership Failure Loop

The real danger extends beyond under-skilled developers. It includes under-formed leaders.

Individuals can rise through environments driven by delivery theatre, title inflation, and investor-fuelled growth without developing the judgment required to challenge weak decisions. Activity replaces progress. Visibility replaces outcomes. Conflict avoidance replaces standards.

Influence shifts toward layers that do not carry operational accountability.

The feedback loop strengthens:

- Weak standards produce fragile systems

- Fragile systems generate noise

- Noise rewards politics over engineering

- Politics promotes weak judgment

- Leadership lowers standards further

The system reinforces its own decay.

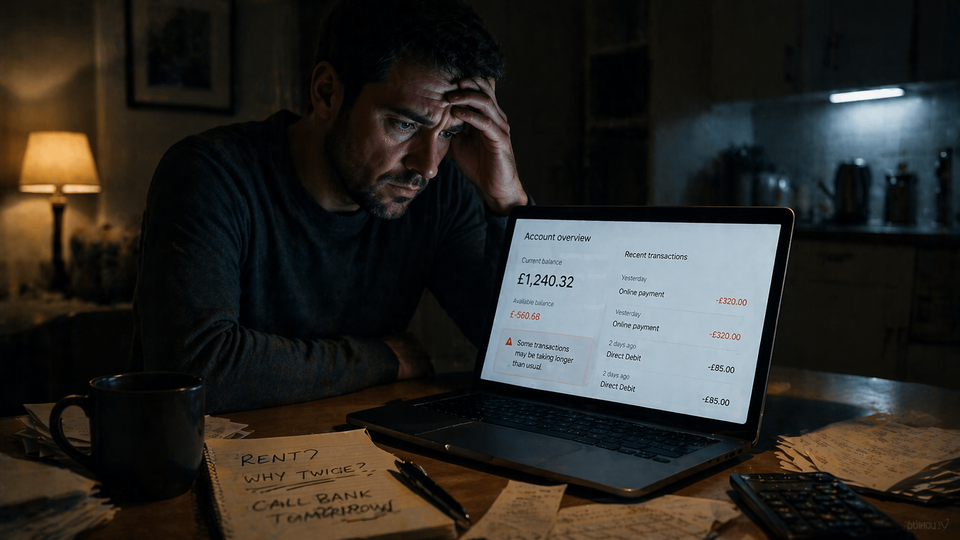

When It Stops Being Harmless

The most concerning aspect of this decay lies not in inefficiency but in dependency.

Software now underpins everyday life: payments, identity verification, logistics, healthcare pathways. Much of this dependency grew through convenience and optimisation.

Standards did not keep pace.

In low-impact environments, damage remains contained. A gaming outage frustrates users. An advertising failure costs money.

But the same patterns extend into systems that:

- process financial transactions

- validate identity and access

- support medical workflows and diagnosis

Here, failure creates exposure.

The system does not distinguish between critical and non-critical domains when standards decay. The same behaviours (poor verification, weak ownership, lack of operational discipline) propagate across both.

This is how incompetence becomes systemic. Not through dramatic failure, but through normalisation.

When a company fails, markets correct. Reputation collapses, revenue disappears, and the organisation often vanishes.

The real cost falls elsewhere. It falls on the people who trusted the system:

- the customer whose transaction fails or corrupts silently

- the individual exposed to fraud while relying on a “secure” platform

- the patient affected by an unstable diagnostic workflow

These are not edge cases. They are externalised consequences of internal decay.

Standards do not only protect systems. They protect people.

Rebuilding Standards

The opposite should have happened.

As tools improved, engineers should have expanded their scope. With AI assistance and modern platforms, they should shape clearer objectives, drive outcome-based roadmaps, and take end-to-end ownership, from idea to production reliability.

Instead, influence shifts toward layers without operational accountability. Product, middle management, and sometimes executive leadership step into the vacuum without owning production reality. Work gets defined without system understanding. Visibility gets optimised instead of outcomes.

The result remains predictable: a growing big ball of mud, entangled systems, unclear ownership, rising coordination cost, and money burning in plain sight.

This is not a people problem. It is a standards problem.

When standards remain explicit and enforced, roles align:

- Engineers own systems and production reality

- Product frames intent without hijacking execution

- Leadership enforces clarity, not noise

When standards disappear, roles blur and accountability evaporates.

Reintroducing standards does not require more process. It requires clearer expectations:

- Engineers think in systems and own outcomes

- Code remains verified, observable, and reversible

- Platforms enable rather than gatekeep

- Decisions stay grounded in reality, not opinion

Standards define survival conditions.

What To Do About It (Interventions)

If the diagnosis holds, cosmetic fixes will fail. Three interventions reset the system when applied together:

1) Re-anchor ownership in engineering (end-to-end)

- Define explicit service ownership: one team, one surface, one set of SLOs

- Make production reality visible: SLO/SLI dashboards, error budgets, incident reviews

- Tie delivery to measurable outcomes (latency, conversion, cost, reliability)
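An error budget turns an SLO into a number a team can spend and defend. The sketch below assumes a request-based availability SLO; the function name, parameters, and returned keys are illustrative, not a standard API.

```python
def error_budget(slo_target, total_requests, failed_requests):
    """Compare observed failures with the failures an SLO allows.

    slo_target is the required success ratio, for example 0.999 for "99.9%".
    """
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be strictly between 0 and 1")
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")

    # The budget is the share of requests the SLO permits to fail.
    allowed_failures = (1 - slo_target) * total_requests
    return {
        "allowed_failures": allowed_failures,
        "budget_consumed": failed_requests / allowed_failures,
        "remaining_failures": allowed_failures - failed_requests,
        "exhausted": failed_requests >= allowed_failures,
    }
```

With a 99.9% target and one million requests, roughly a thousand failures are allowed. A team that has burned 250 of them has a factual answer to "can we ship this risky change?" rather than an opinion.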

2) Collapse donkey tasking into outcome-driven flows

- Replace fragmented tickets with small, outcome-bound batches

- Enforce build–run–improve within the same team

- Remove unnecessary handoffs and approval layers

3) Turn platforms into leverage, not bureaucracy

- Provide paved roads: self-service deploy, rollback, observability, compliance

- Encode standards as code (templates, policies, guardrails)

- Measure platform success through adoption and cycle time reduction
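"Standards as code" can start very small. The sketch below assumes each service ships a manifest (shown here as a plain dictionary) and that the platform rejects manifests missing the basics. The field names and the `check_manifest` function are hypothetical; a real platform would define its own schema.

```python
# Hypothetical minimum standard: every service must declare these fields.
REQUIRED_FIELDS = {
    "owner": "one team is accountable for the service",
    "slo": "reliability expectations are explicit",
    "rollback": "every change is reversible",
    "dashboard": "production reality is observable",
}


def check_manifest(manifest: dict) -> list[str]:
    """Return a list of violations; an empty list means the manifest passes."""
    violations = []
    for field, reason in REQUIRED_FIELDS.items():
        # A missing key and an empty value are both treated as violations.
        if not manifest.get(field):
            violations.append(f"missing '{field}': {reason}")
    return violations
```

Run in a pipeline, a check like this replaces an approval meeting with a guardrail: the standard is stated once, applied to everyone, and nobody waits in a ticket queue to learn what it is.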

Apply all three together. Isolation leads to regression.

Conclusion

The industry has not lost speed. It has lost standards.

Without standards, speed accelerates decay.

We did not democratise engineering. We diluted it.

I work with organisations whose delivery has become unpredictable and whose systems are collapsing under their own complexity, helping them restore ownership, standards, and operational clarity.

The question is no longer whether the system drifts.

It is whether correction happens before the cost becomes irreversible.
